The memory wall problem

And a short reflection on how I need to write more!

1. My writings should be quantity over perfect quality

The problem I have with blogging/writing in general is that I really really want my writing to be perfect. I think this is a bad thought to have, because:

It would benefit myself to commit my thoughts to the public domain for various reasons personally and professionally. It’ll also compress the OODA loop of my writings.

It would benefit the people who read my work to get a sense of the ideas I’m thinking about even if they aren’t fully ironed out. As far as I can tell, people seem to care more about the novelty of ideas rather than the strength of the argument. I don’t know if that is good or bad, but oh well!

With that said, here are the things I aim to commit to:

For most pieces, finish the writing in under an hour or so. This piece was done in ~50 minutes.

Publish at least once a week. If I don’t have any good ideas, I won’t publish.

That means more of my future writings going forward will have a casual nature to it, like this post right now.

I will still write very detailed essays or thoughts on random subjects, but those will come after I’ve already written shorter and faster-to-release pieces on them.

I’ll include what I read or watched for the week and note any interesting observations.

Anyways, moving on, here’s a short excerpt regarding the “memory wall” problem in AI training and inference that I wrote for another publication. Made some edits and added some more interesting facts (especially in the footnotes).

2. Memory is the LLM bottleneck, not compute!

In February 2024, Sam Altman posted on X that OpenAI generates about 100 billion words per day. A year later, it was revealed that ChatGPT users send 2.5 billion prompts a day. If we basically assume that the average prompt is 200 tokens, that means within a year, daily word generation has increased by ~3.75x. I suspect hardcore power users (such as myself with 800+ token prompts) will drive this number up closer towards 5x, if not more.

Google also announced in April 2025 that it was processing 480 trillion tokens per month (or roughly 12 trillion words a day), up from 9.7 trillion tokens per month a year ago. That’s 50x growth!

To sustain these growth levels, more compute is needed. Consequently, OpenAI announced in January 2025 that it was building a 5GW AI datacenter for $500 billion. Not to be outdone, Zuckerberg announced that Meta would also build Hyperion — a 5GW AI datacenter — in July 2025, which follows an earlier 1GW AI datacenter deployment called Prometheus in Ohio.1

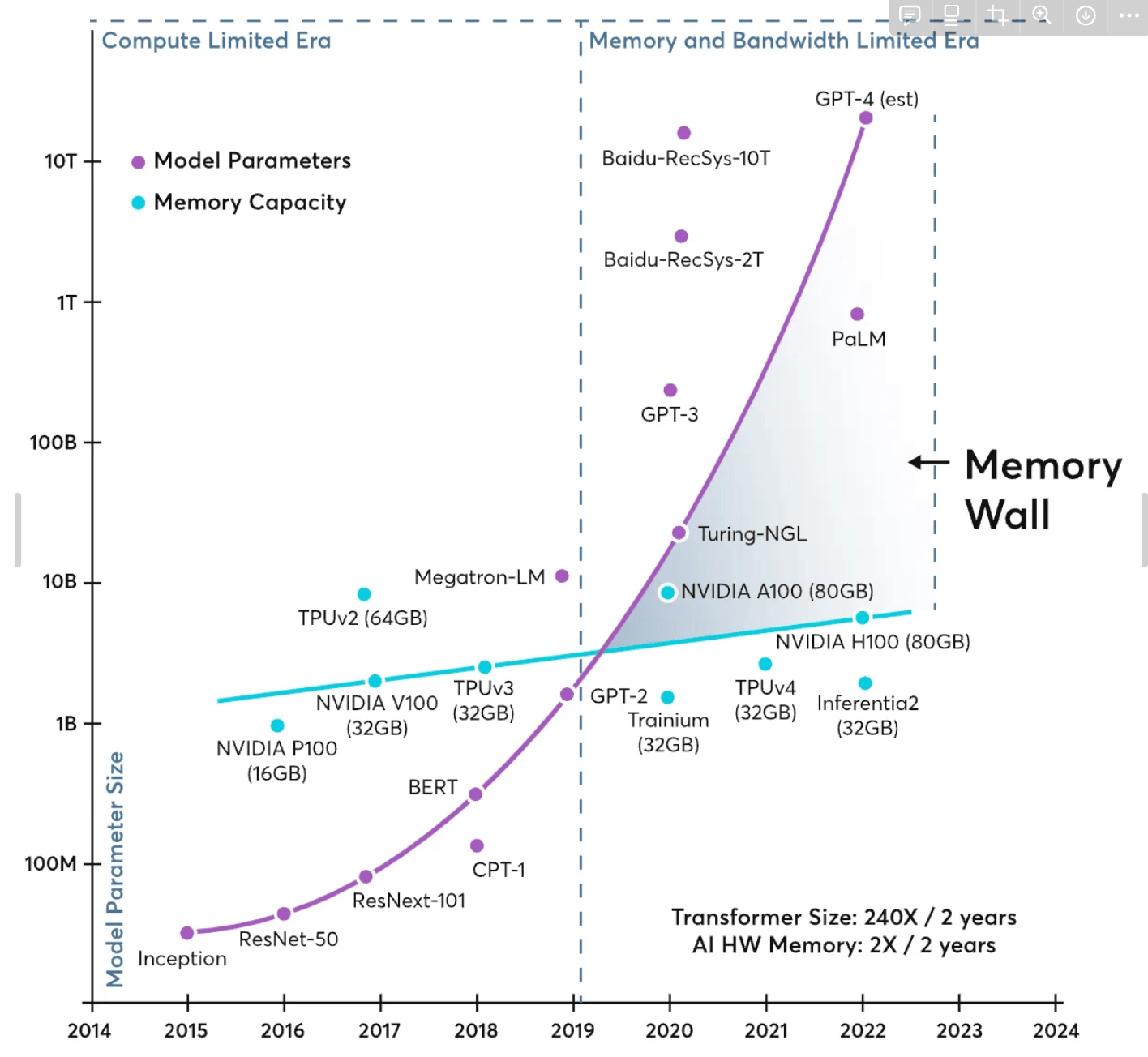

Yet, despite all the hype around Nvidia, GPUs, and other AI accelerators, it is interesting to note that compute is no longer the bottleneck. As the AI infrastructure buildout race has revealed, compute capabilities are continuing to scale thanks to Nvidia, as well as other notable AI accelerator startups like Cerebras and Groq, but data movement and bandwidth have not scaled as fast. It has been well-established that the rate of memory bandwidth improvements has increased much slower than raw compute (in FLOPs) for years. Known as the “memory wall” problem, LLMs are now bottlenecked by how fast data can transfer between the computation and memory unit of a chip. The maximum rate that this data transfer can occur is the chip’s memory bandwidth.

In a simplified sense, LLMs mathematically depend on massively parallelized matrix multiplications (MatMuls) to operate. However, during MatMul operations, a GPU (or other type of AI accelerator) must read both matrices from memory, perform the computation, then save the result back to memory — and because intra-GPU data movement is not instantaneous (limited by the speed of signal propagation), this takes a certain, non-zero amount of time.

Furthermore, because SOTA models have billions or even trillions of parameters, a model must be “cut up” and spread across multiple GPUs. Consider the open-source LLaMa 3-70B model (a medium-sized model) running on a typical Nvidia H100 GPU, which has 80GB of on-chip memory. Just to load the model onto the chip requires roughly 70GB of memory (at quantized INT8 weights; using FP16 weights alone requires roughly 140GB).2 Note that this doesn’t include additional overhead from the KV-cache or user-inputted prompt tokens! Thus, even this medium-sized model needs to be sharded across multiple H100 GPUs via tensor or pipeline parallelism. Collectively, inter-GPU data movement is a necessity to run a model, in which GPUs share the partial MatMul results between each other so it can work in synchrony.

All of this results in GPUs being idle while waiting for data transfers at training and inference time. Several papers have found that large-batch inference results in 50% of GPU cycles to remain idle due to data-fetch delays. Communication overhead has dominated the runtime of these models, where terabytes of data needs to be exchanged for a single forward-pass step during inference. If LLMs continue to get larger, one must surpass the current memory wall.3

3. Recommended reads

1. Scientific philosophy and a model for how science progresses

Related to my longer-form piece on accidental inventions, spurring scientific revolutions, and evolutionary versus revolutionary technologies. Good read. Similar to this piece in my Obsidian: the structure of metascientific revolutions.

It is interesting to note that both authors claim that real scientific advancement occurs when old paradigms about the world can no longer explain what scientists see. Relevant to today, I think this could be cautiously applied to AI research and compute scale up, where old paradigms of the world (GPUs, memory interconnects) can no longer sustain what scientists are attempting to accomplish. We might see a real change in technology regarding the compute space, and we are already seeing this play out.

A question I have is whether this is the end of science? Clearly this does not seem to be true. I would imagine there are a lot more scientific discoveries to be found. However, both articles do claim that science is getting harder, that there is a sufficient burden of knowledge, and that science investment is leading to less downstream impact. Is there an inflection point where the resources required to conduct breakthrough sciences are too monumental to continue? Are there any pieces on this?

2. The biosecurity thesis

Tangentially related to what I am tinkering with and am talking with people in the space now. My take is that biosecurity is more of a government defense problem than a viable commercial problem. There's probably a business model that can be innovated on here, but I haven't thought that much into it.

Related: defense-forward biosecurity and the bioweapons are coming.

3. Physical turing test

Interesting perspectives and framings on scaling robotics, though this seemed to contradict the conversation I had with Ghazwa Khalatbari, who recently published: The Age of Embodied Intelligence: Why The Future of Robotics Is Morphology-Agnostic.

Apparently, Prometheus and Hyperion will use enough energy to power millions of homes. One Meta data center campus in Georgia is already causing the water shortages for some residents. I am still evaluating whether this is true or is a meaningful concern and will read this piece next.

This can be easily calculated. If assuming half-precision FP16 for inference, then each parameter is 2 bytes, so 70B x 2 bytes = 140GB of memory usage. That’s for model weights only. For KV cache size per token, you can just this calculator (the formula is also simple). This is for inference only. You typically train at full-precision (though this is changing), requiring 4 bytes per parameter, and you need to account for the optimizer states and activations. Thus, training a LLaMa 3-70B model requires ~10 H100s, minimum, but it’ll probably take years to converge to an acceptable loss.

Memory demand will explode. At inference time, reasoning models will output thousands of tokens, and based on the transformer architecture, the attention heads will accumulate and the KV-cache will grow quadratically. Basically, if you go from using 10,000 token to reason to a 100,000 token reasoning chain, your memory usage grows by more than 10X. Hint hint to someone recently asking about my stock picks, memory semiconductors were cheap when irrational market fears exploded over DeepSeek’s GRPO/MHLA architecture and using less memory/compute. SK Hynix is up 50% YTD for starters.

Would love to see a longer form article on this!